$30 billion in AI investment, up in smoke.

That’s what MIT found when researchers studied more than 300 publicly disclosed AI deployments: 95% of the organizations reported zero measurable return.

The researchers spent six months conducting and analyzing interviews with leaders across multiple industries. What they found surprised them.

The study’s authors call it The GenAI Divide. On one side, organizations see millions in operational impact. Everyone else is on the other side, still running pilots and getting no results.

Why Does AI Fail in Revenue Operations?

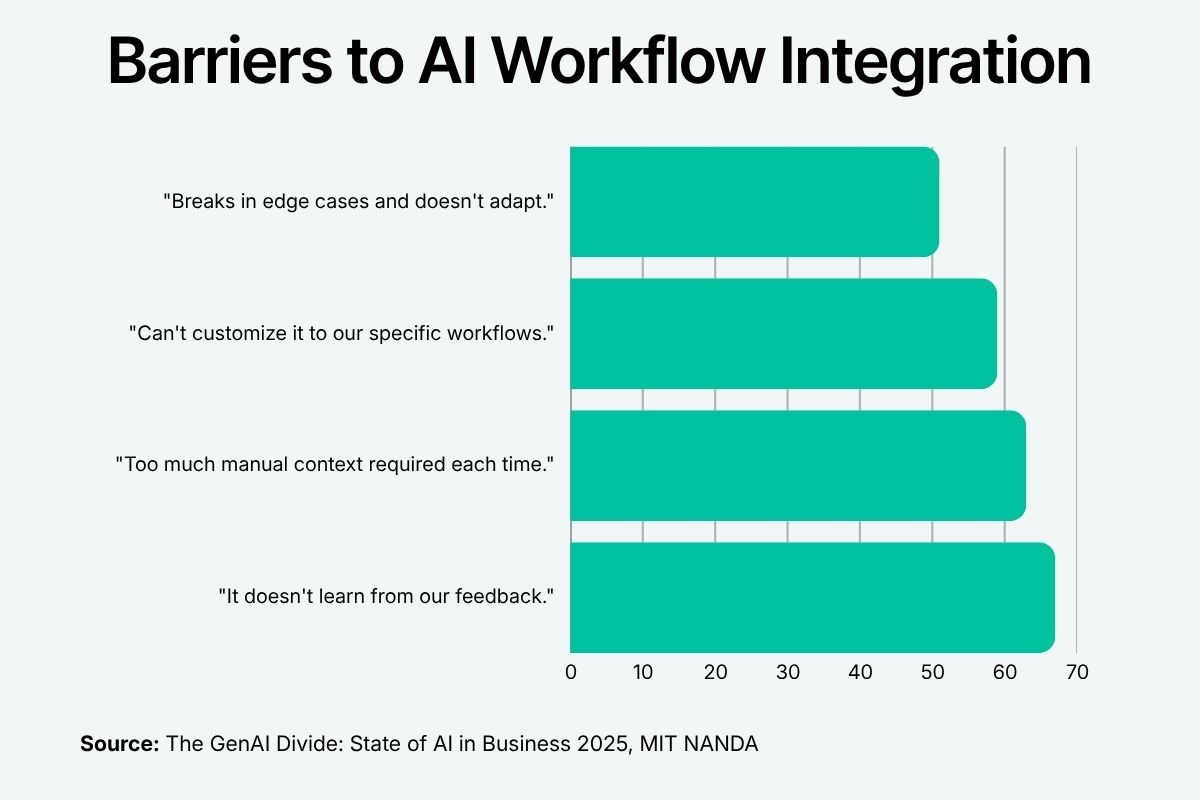

MIT identified 4 common barriers to AI deployment:

- Lack of learning

- Too much manual context required

- Lack of customization

- Lack of adaptability

The researchers saw that tools like ChatGPT and Copilot improved individual productivity. But those tools didn’t touch the underlying workflows.

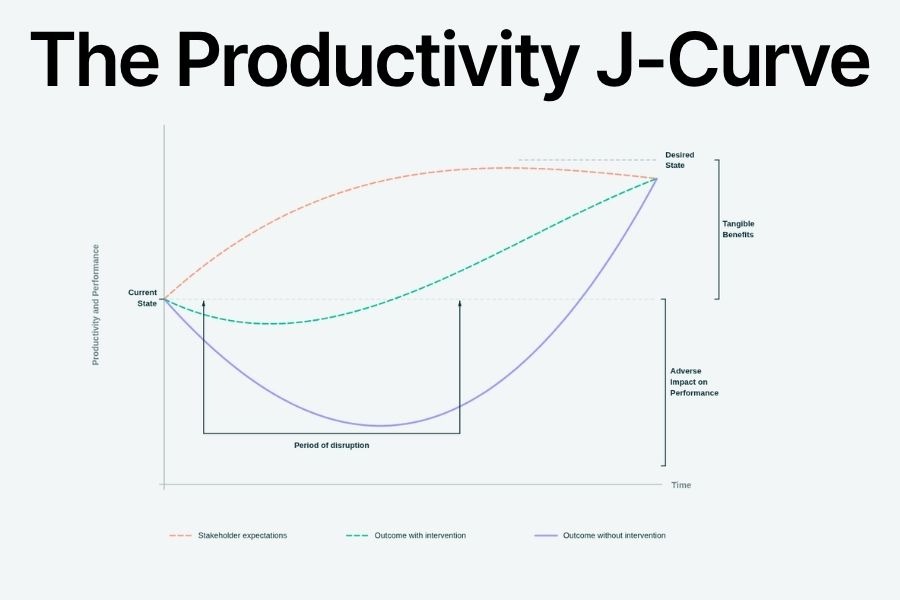

Economics has a name for this phenomenon: the Productivity J-Curve. General-purpose technologies like GenAI require investments in process redesign, workflow integration, and organizational adaptation. Without these interventions, organizations don't see desired results in early metrics. So they pull the plug before the curve turns.

Imagine dropping a skilled RevOps analyst into a room with no CRM access, no training on your sales process, and no clarity on what decisions they're authorized to make. Then you ask them to create an accurate forecast. Would you expect good results in that environment?

I’ve seen this exact environment derail dozens of AI pilots. After building RevOps systems for years, I’ve found that the solution comes down to three core AI infrastructure principles:

1. Constraints: Give the Sandbox Some Boundaries

AI needs constraints to deploy effectively in revenue operations. The MIT research found that the tools which crossed the GenAI Divide shared one defining characteristic: they were process-specific and tightly integrated. In other words, they had a defined job with clear rules for how to do it.

In RevOps, that means defining three things before any deployment:

- The data layer: specify which CRM fields, call summaries, and email threads the AI can see. An AI with access to everything produces confident outputs based on noise. An AI with access to the right signals produces reliable ones.

- The playbook logic: tell the AI what steps to take. In a RevOps analysis, the AI needs guidelines for what counts as a stale deal, what triggers a risk flag, and what qualifies a stage advance. If the AI is working without defined criteria, it won’t follow a predictable process, so it won’t deliver predictably accurate results.

- The authorization model: define what the AI can do autonomously, what requires a single-click approval, and what requires a human decision. This is the line between a tool that accelerates execution and one that creates new cleanup work.
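The three definitions above can be written down as a small, explicit configuration. The following is only a sketch under stated assumptions: the class names, field names, the 14-day threshold, and the action names are all hypothetical and do not come from the MIT report or from any particular product.

```python
from dataclasses import dataclass
from enum import Enum


class Approval(Enum):
    AUTONOMOUS = "autonomous"          # AI may act without review
    ONE_CLICK = "one_click"            # AI drafts, a human approves with one click
    HUMAN_DECISION = "human_decision"  # AI only recommends, a human decides


@dataclass
class Constraints:
    allowed_fields: frozenset  # data layer: fields the AI may read
    stale_after_days: int      # playbook logic: when a deal counts as stale
    approvals: dict            # authorization model: action name -> Approval

    def visible(self, record: dict) -> dict:
        """Return only the fields the AI is allowed to see."""
        return {k: v for k, v in record.items() if k in self.allowed_fields}

    def is_stale(self, days_since_activity: int) -> bool:
        """Apply the playbook's stale-deal threshold."""
        return days_since_activity >= self.stale_after_days

    def approval_for(self, action: str) -> Approval:
        """Unlisted actions default to the most restrictive level."""
        return self.approvals.get(action, Approval.HUMAN_DECISION)


# Hypothetical example values, for illustration only.
constraints = Constraints(
    allowed_fields=frozenset({"stage", "close_date", "last_activity_date"}),
    stale_after_days=14,
    approvals={
        "draft_follow_up": Approval.AUTONOMOUS,
        "update_stage": Approval.ONE_CLICK,
    },
)
```

Defaulting unknown actions to a human decision is one possible design choice: anything nobody thought to authorize stays out of the AI's hands until someone decides otherwise.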

When revenue teams skip this step, the AI starts operating on bad context. It flags deals that aren't at risk. It misses ones that are. Reps stop trusting it. Managers override it. Within 90 days they dismiss it as background noise.

Ask 3 questions to diagnose a constraint problem:

- What data does the AI access?

- What playbook does it follow?

- What decisions is it authorized to make?

2. Visibility: Show Your Work

If you can’t see or understand what an AI is doing, you can’t trust it. The MIT report documented a pattern it called the shadow AI economy. Employees at over 90% of companies surveyed were already using personal AI tools (like Claude and ChatGPT) to automate significant portions of their jobs, usually without IT approval. These tools generally explain their actions, but those explanations aren’t visible to the wider organization.

A working RevOps implementation looks like this: every AI-generated output shows its reasoning, e.g., “deal flagged because of no activity in 18 days, close date unchanged, no second contact engaged.” Every automated action has an audit trail that shows what the AI did, when, and based on which data state. Reps and managers grasp the logic without a documentation deep dive.
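As a rough illustration of output that shows its reasoning, the sketch below attaches plain-language reasons and a data snapshot to every flag. The `Deal` fields, the `flag_at_risk` function, and the rule itself (inactivity is required, the other two signals are supporting) are assumptions made for this example, not a description of any specific tool.

```python
from dataclasses import asdict, dataclass
from datetime import date, datetime, timezone
from typing import Optional


@dataclass
class Deal:
    name: str
    last_activity: date
    close_date: date
    close_date_at_last_review: date
    contacts_engaged: int


@dataclass
class Flag:
    deal: str
    reasons: list        # human-readable reasons shown to the rep
    data_snapshot: dict  # the data state the decision was based on
    created_at: str      # when the flag was raised (UTC, ISO 8601)


def flag_at_risk(deal: Deal, today: date, stale_days: int = 18) -> Optional[Flag]:
    """Flag a deal only if it is inactive, and record every reason that applies."""
    idle = (today - deal.last_activity).days
    if idle < stale_days:
        return None  # recent activity: nothing to flag under this rule
    reasons = [f"no activity in {idle} days"]
    if deal.close_date == deal.close_date_at_last_review:
        reasons.append("close date unchanged")
    if deal.contacts_engaged < 2:
        reasons.append("no second contact engaged")
    return Flag(
        deal=deal.name,
        reasons=reasons,
        data_snapshot=asdict(deal),
        created_at=datetime.now(timezone.utc).isoformat(),
    )
```

Storing the snapshot alongside the reasons means the audit trail can answer "based on which data state" even after the CRM record has changed.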

As AI takes more consequential actions in revenue workflows (like updating CRM records, queuing follow-ups, and reclassifying deal stages), the ability to audit what happened and why becomes a requirement, not a best practice. You can't manage what you can't see, and you can't scale what your team doesn't trust.

Run this diagnostic test: if your AI writes a follow-up for an at-risk deal and a rep asks "why did this get flagged," how many clicks does it take to answer that question? If the answer is more than one, you have a visibility problem.

3. Measurability: Prove the Impact

AI that gets measured gets managed. The MIT report found that investment in AI is heavily concentrated in sales and marketing, which account for roughly 70% of AI budget allocation across surveyed organizations. Why? Their outcomes are easiest to measure; they’re the closest to revenue.

Even so, connecting AI to those measurements is another challenge. One VP described the problem precisely: "If I buy a tool to help my team work faster, how do I quantify that impact? How do I justify it to my CEO when it won't directly move revenue or decrease measurable costs?"

AI is doing the work. Prep time is down, follow-up rates are up, and CRM records are more complete. But no one defined the expected outcomes and connected them to the AI activity. The gains are invisible to the people who control the budget. The pilot gets pulled because it couldn't prove the impact.

Before any AI deployment, answer three diagnostic questions:

- What specific metric moves if this works?

- What's the baseline today?

- What does a 90-day result look like?

If any of those are blank, the pilot isn't ready.
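One lightweight way to enforce that rule is to treat the three questions as required fields. This is a minimal sketch: `PilotPlan`, its field names, and the `relative_lift` helper are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PilotPlan:
    metric: Optional[str] = None           # what specific metric moves if this works?
    baseline: Optional[float] = None       # what's the baseline today?
    target_90_day: Optional[float] = None  # what does a 90-day result look like?

    def missing(self) -> list:
        """Names of the diagnostic questions that are still blank."""
        return [name for name, value in vars(self).items() if value in (None, "")]

    def ready(self) -> bool:
        """The pilot is ready only when nothing is blank."""
        return not self.missing()


def relative_lift(baseline: float, observed: float) -> float:
    """Change relative to the baseline, e.g. 0.40 -> 0.50 is a lift of about 25%."""
    return (observed - baseline) / baseline
```

For example, `PilotPlan(metric="follow-up rate", baseline=0.40).ready()` returns `False`, because the 90-day target is still blank.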

The Bottom Line: Build the Systems Around the AI

The GenAI Divide is real: it separates the companies building support systems from the ones that aren’t. Run the audit. Find the bottlenecks. Build the solution into your operations. We built Chief around these core principles to make every revenue workflow more predictable and reliable.

Try Chief free today.
