AI projects keep failing.

Gartner projects that over 40% of agentic AI projects will be canceled by the end of 2027. Separately, S&P Global Market Intelligence found that the share of companies abandoning most of their AI initiatives jumped from 17% in 2024 to 42% in 2025.

The technology works. But organizations are deploying it without understanding which tasks it's actually suited for.

The mismatch creeps up on revenue leaders. It shows up as a forecast that looks clean until finance asks why the commit roll-up was off by $2M. By that point, the agent has often run the same flawed logic hundreds of times.

The Core Problem: Using Probabilistic Tools for Deterministic Work

There’s one distinction most revenue teams miss when they deploy AI: probabilistic work vs. deterministic work.

An LLM is a probabilistic model. It generates a statistically likely output given a set of inputs; in other words, it makes a sophisticated pattern-based guess. That guess is usually very good, but the same prompt is not guaranteed to produce the same output twice.

Revenue operations, by design, are deterministic. The same inputs must produce the same output, every time, with a clear explanation. When a deal moves from Stage 3 to Stage 4, the rules governing that transition need to behave identically whether it's Tuesday morning or Friday afternoon, whether the rep is a top performer or a new hire. Forecast accuracy, pipeline hygiene, territory splits, and quota calculations are all deterministic processes.
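To make "same inputs, same output, with a clear explanation" concrete, here is a minimal sketch of a stage-transition rule written as a pure function. The field names (`champion_identified`, `decision_date_set`) and the Stage 3 to Stage 4 criteria are hypothetical, chosen only for illustration; your own exit criteria will differ.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Deal:
    # Hypothetical fields, not taken from any specific CRM schema.
    stage: int
    amount: Decimal
    champion_identified: bool
    decision_date_set: bool


def can_advance_to_stage_4(deal: Deal) -> tuple[bool, list[str]]:
    """Return (allowed, reasons_blocked).

    Pure function: it reads no clock, no random source, and nothing
    about the rep, so identical deals always get identical answers.
    """
    reasons = []
    if deal.stage != 3:
        reasons.append("deal is not in Stage 3")
    if deal.amount <= 0:
        reasons.append("amount must be positive")
    if not deal.champion_identified:
        reasons.append("no champion identified")
    if not deal.decision_date_set:
        reasons.append("no decision date set")
    return (not reasons, reasons)


deal = Deal(stage=3, amount=Decimal("48000"),
            champion_identified=True, decision_date_set=False)
print(can_advance_to_stage_4(deal))
```

The returned list of reasons is what provides the "clear explanation": every blocked transition says exactly which rule it failed.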

What happens when you hand deterministic work to a probabilistic tool?

You don't get obvious failures. You get slow, invisible, confidence-eroding drift. Numbers that were right last week are slightly off this week. Trends that seemed solid turn out to have been shaped by variance in the LLM's outputs—not actual changes in the business.
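One way to make this kind of variance visible is to run the same frozen inputs through the pipeline several times and compare the results. The sketch below assumes a hypothetical `run_forecast` callable standing in for whatever produces your number. The "noisy" version only simulates a probabilistic tool with seeded random noise; it does not call a real model.

```python
import random
from decimal import Decimal
from typing import Callable, Sequence

Forecast = Callable[[Sequence[Decimal]], Decimal]


def repeatability_report(run_forecast: Forecast,
                         frozen_inputs: Sequence[Decimal],
                         runs: int = 5) -> dict:
    """Run identical inputs several times and report how far the output moves."""
    outputs = [run_forecast(frozen_inputs) for _ in range(runs)]
    spread = max(outputs) - min(outputs)
    return {"outputs": outputs, "spread": spread, "repeatable": spread == 0}


def rule_based_forecast(amounts: Sequence[Decimal]) -> Decimal:
    # Deterministic stand-in: a plain sum.
    return sum(amounts, Decimal("0"))


def make_noisy_forecast(seed: int) -> Forecast:
    # Simulated probabilistic tool: the sum scaled by a random factor
    # between 0.98 and 1.02. Purely illustrative.
    rng = random.Random(seed)

    def noisy(amounts: Sequence[Decimal]) -> Decimal:
        factor = Decimal(str(round(rng.uniform(0.98, 1.02), 4)))
        return sum(amounts, Decimal("0")) * factor

    return noisy


pipeline = [Decimal("120000"), Decimal("45000.50"), Decimal("310000")]
print(repeatability_report(rule_based_forecast, pipeline)["repeatable"])
print(repeatability_report(make_noisy_forecast(seed=7), pipeline)["spread"])
```

A nonzero spread on frozen inputs means the week-over-week movement in a report may be coming from the tool rather than from the business.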

This is what AI drift looks like. And too many revenue leaders don't recognize it until it's already in the board deck.

What AI Drift Looks Like at Scale

Let’s look at an example from outside the RevOps world. This comes from a company that trusted an AI model to do deterministic financial work without the guardrails to back it up.

In 2021, Zillow shut down its home-buying division after its AI pricing algorithm made catastrophic valuation errors across thousands of properties. The system—built on the well-known Zestimate model—was designed to predict home values accurately enough to guide Zillow's purchase decisions in real time. The algorithm consistently overestimated property values during a period of market volatility, buying homes at above-market prices it couldn't later recoup. Total losses exceeded $500 million, and Zillow laid off 25% of its workforce.

The root cause wasn't that the model was poorly built. It simply couldn’t reliably forecast short-term home values within the narrow margin the business required. And nobody had put hard guardrails in place to catch it when it drifted. The outputs looked reasonable. They passed review. The errors only became visible when the properties couldn't be resold profitably.

This is exactly the failure mode that threatens RevOps teams today, just at a smaller scale per incident and harder to see. Zillow's loss was concentrated and visible. Revenue forecast drift is distributed and slow: a few percentage points off commit here, a slightly inconsistent pipeline stage classification there. By the time it surfaces, it's already been compounding for quarters.

The lesson isn't that AI can't be used for high-stakes financial decisions. Zillow's competitors Opendoor and Offerpad were running similar AI models and fared significantly better. Why? They had built processes to detect model drift and adjust. The difference was the architecture around the technology.

The RevOps Use Cases Where LLMs Earn Their Place

None of this means LLMs are the wrong tool for revenue teams. It means teams need to be honest about which jobs they're suited for.

LLMs genuinely excel at tasks that require judgment, pattern recognition, and the ability to synthesize messy, unstructured information. In a RevOps context, that looks like:

- Deal risk detection: Reading across call transcripts, email threads, and CRM activity to flag deals that feel stalled—even when the stage hasn't moved and the rep is still optimistic.

- Anomaly detection: Identifying when a trend looks unusual against historical patterns, then flagging it for a human to investigate.

- Narrative generation: Turning structured pipeline data into plain-language summaries for QBRs or board updates.

- Qualitative signal processing: Extracting intent, urgency, and competitive signals from unstructured text that no rule-based system could parse.

These are tasks where some variance is acceptable, even useful. You want the model to catch something a human might miss. You don't need it to catch it identically every time.

Then there are tasks you shouldn't hand to an out-of-the-box LLM: commit roll-ups, quota calculations, territory assignments, stage progression rules, revenue recognition logic, or any calculation that feeds a number into a board report.

How to Use LLMs on Deterministic RevOps Tasks

The question isn't "should we use AI in RevOps?" It's "can we get an LLM to do reliable deterministic work?"

Our hypothesis at Chief is that you can, with one caveat: you need significant engineering behind it. That means having three things in place:

- Rigorous system prompts. The instructions given to a model need to be explicit, specific, and tested hard against edge cases. A vague prompt produces variable behavior. A well-engineered prompt—one that defines the exact logic the model should follow, the data it should reference, and the format it must return—dramatically reduces variance.

- Strict instructional guardrails. The model needs boundaries. Not just what to do, but what it must never do. No inferring. No rounding. No "close enough." In revenue contexts, the model should be explicitly prevented from making judgment calls that belong to deterministic rules.

- A governed data layer. The model needs to be connected to clean, structured data. Its outputs need to be validated against expected ranges before they flow downstream. Human review checkpoints need to be built into the workflow at moments that matter most. This is where CRM hygiene goes from housekeeping problem to AI performance problem.
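The validation step in the third point can be sketched roughly as follows. The model's reported number is parsed strictly, checked against an expected range, and reconciled with a roll-up computed by plain rules from the same CRM rows. Anything that fails goes to human review instead of flowing downstream. The JSON shape (`commit_total`), the field names, and the function names are all assumptions made for this example.

```python
import json
from decimal import Decimal, InvalidOperation


def commit_rollup(deals: list[dict]) -> Decimal:
    """Deterministic reference: exact sum of amounts in the 'commit' category."""
    return sum(
        (Decimal(d["amount"]) for d in deals if d["forecast_category"] == "commit"),
        Decimal("0"),
    )


def validate_commit(model_output: str, deals: list[dict],
                    low: Decimal, high: Decimal) -> dict:
    """Check a model-reported commit total before anything downstream sees it."""
    try:
        reported = Decimal(json.loads(model_output)["commit_total"])
    except (json.JSONDecodeError, KeyError, TypeError, InvalidOperation):
        return {"status": "needs_review", "reason": "malformed output"}
    if not reported.is_finite():
        return {"status": "needs_review", "reason": "non-finite value"}
    if not (low <= reported <= high):
        return {"status": "needs_review", "reason": "outside expected range"}
    expected = commit_rollup(deals)
    if reported != expected:  # exact match: no rounding, no "close enough"
        return {"status": "needs_review",
                "reason": f"reported {reported}, rules give {expected}"}
    return {"status": "ok", "commit_total": expected}


deals = [
    {"amount": "120000.00", "forecast_category": "commit"},
    {"amount": "45000.50", "forecast_category": "commit"},
    {"amount": "310000.00", "forecast_category": "best_case"},
]
low, high = Decimal("100000"), Decimal("500000")
print(validate_commit('{"commit_total": "165000.50"}', deals, low, high))
print(validate_commit('{"commit_total": "165000"}', deals, low, high))
```

The second call shows a rounded figure being stopped even though it falls inside the expected range. Using `Decimal` rather than floats keeps the comparison exact.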

This is not an out-of-the-box LLM experience. It's not science fiction either. It's just good engineering.

A Practical Framework for AI Deployment

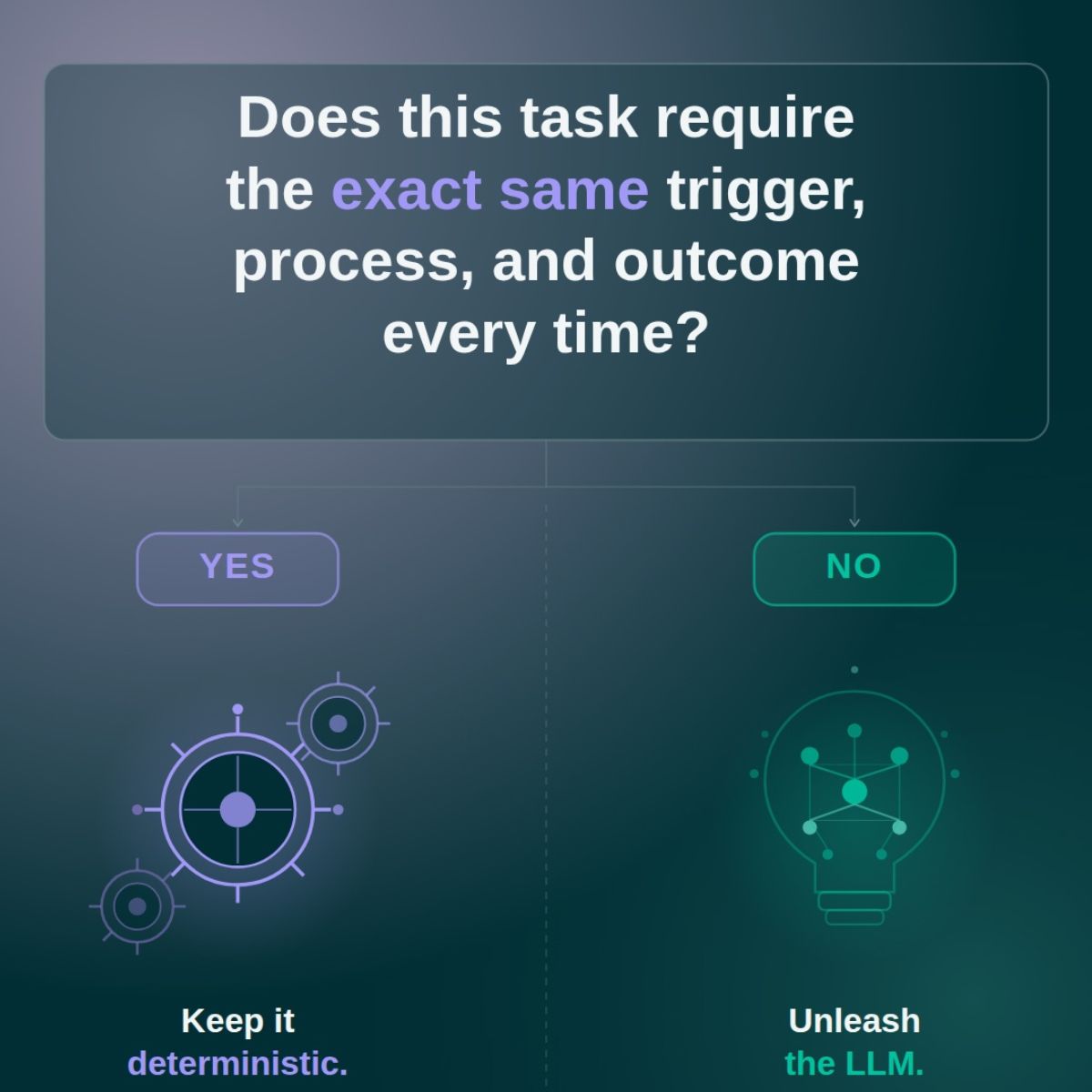

Before deploying any AI tool into your revenue workflow, ask one question:

Does this task require the exact same trigger, process, and outcome every time?

If yes, keep it deterministic. Build it on rules, structured logic, or a carefully engineered AI system with hard guardrails. Don't use a general-purpose LLM and hope for consistency.

If no, this is where an LLM earns its place. Pattern recognition, anomaly detection, qualitative signal processing, and narrative generation are tasks where the model's probabilistic nature is a feature, not a bug.
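If it helps to make the question explicit in your tooling, the decision can be written down as a small lookup. This is only a sketch: the task names come from the lists earlier in this article, while the snake_case identifiers, the `Engine` enum, and the choice to raise on unclassified tasks are illustrative assumptions.

```python
from enum import Enum


class Engine(Enum):
    RULES = "rules"  # rules, structured logic, or an engineered AI system with hard guardrails
    LLM = "llm"      # general-purpose model, where some variance is acceptable


# Tasks that need the same trigger, process, and outcome every time.
SAME_OUTCOME_EVERY_TIME = frozenset({
    "commit_rollup", "quota_calculation", "territory_assignment",
    "stage_progression", "revenue_recognition",
})

# Tasks where variance is acceptable, even useful.
VARIANCE_ACCEPTABLE = frozenset({
    "deal_risk_detection", "anomaly_detection",
    "narrative_generation", "qualitative_signal_processing",
})


def choose_engine(task: str) -> Engine:
    if task in SAME_OUTCOME_EVERY_TIME:
        return Engine.RULES
    if task in VARIANCE_ACCEPTABLE:
        return Engine.LLM
    # Unclassified work is not guessed at: someone has to answer the question first.
    raise ValueError(f"unclassified task: {task!r}")


print(choose_engine("commit_rollup"))
print(choose_engine("narrative_generation"))
```

Raising on unknown tasks is a deliberate design choice in this sketch: it forces the classification to happen before deployment rather than by default.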

The teams getting this right in 2026 make this distinction early. They're not anti-AI; they're precise about which job they're hiring AI to do.

We built this principle directly into Chief's Assignments (preconfigured workflows with the system prompt, guardrails, and data connections locked in). For example, Chief runs a Deal Risk Analysis for me every morning. It flags stale deals, single-threaded opportunities, and close date mismatches—using the same logic every time.

If you want to see how we approach deterministic RevOps tasks in Chief, request a demo.
